Modern Data Platform

The foundational data tools you need in one platform

A modern data platform or modern data stack is virtually a requirement for any business that wants to foster a data-driven culture and leverage its data for competitive advantage.

To address the terminology: data stack and data platform are often used interchangeably, although technical distinctions are emerging as the functionality evolves.

This post is built on the foundational understanding that the modern data stack includes an ETL tool, a data warehouse, and a data transformation layer. Mozart Data is a data stack solution that selects the best tools and offers them as a cohesive stack, although companies can elect to choose their own tools and create their own custom stack.

A modern data platform goes beyond the functionality of the data stack, and it is this drive to expand our toolset that puts Mozart Data (as well as some other companies) into the modern data platform category. For instance, instead of adding and managing a separate tool such as Airflow for data lineage, observability, or task management, you get those features built in. The Mozart Data platform also offers alerting features that are not standard in a data stack. Users can set data alerts that fire when data meets certain conditions, whether to check for specific problems in the data, like missing fields, or to flag a business event, such as inventory reaching a certain level.

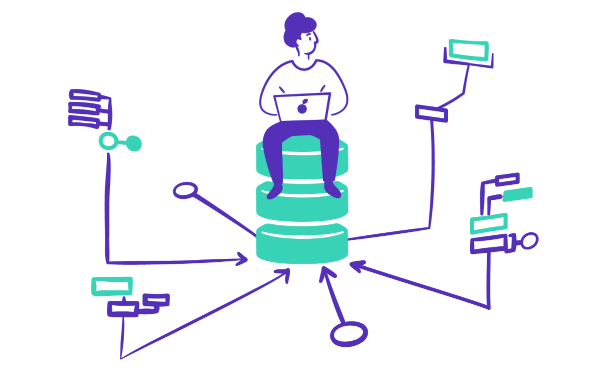

The uses for a modern data platform or data stack are far-reaching. A data platform gives your business centralized, organized, and reliable data, which allows your teams to focus on analysis and data-driven decision-making rather than data management.

The sooner a business implements a reliable data platform, the better. In an early-stage start-up it can be tempting to cut costs and cobble together ad hoc solutions at first, but that tends to lead to bigger expenses down the line when you do need to make the jump to a better solution. The more data you collect, the bigger the tangle you’ll create and the longer and more expensive it will be to untangle.

When setting up a data platform, the platform architecture is integral to ensuring that data is properly collected, secured, and stored.

While there are no universally agreed upon modern data architecture principles, the three that our partners at Snowflake have outlined are worth keeping in mind:

Consider data a shared resource: to create a data-driven culture, it’s critical that all teams have access to the data your business is generating every day. Accessible data means not only that proper permissions are in place, but also that the data is available in a form that is actually usable by everyone, which often means ready for data visualization.

Ensure security and control access: data security and data governance are an integral part of any reasonable data platform architecture. How data is generated, managed, stored, and deleted all must be carefully thought through and implemented.

Reduce or eliminate data movement and replication: The movement of data from one place to another is not only time-consuming but represents a risk both to data security and data reliability. A modern data platform can help to minimize data movement by keeping your data flowing easily and safely from its raw form through to your business intelligence tool.

Modern data platform architecture relies on the core components of the platform working in harmony with one another.

Modern data platform components will include different layers necessary to turn raw data into actionable business intelligence, including:

Data warehouse or other repository

Extract, transform, and load (ETL) tool

Data transformation

Data cataloging

Data lineage

Data alerts

Automated testing plays a critical role in ensuring the quality of data. But what is data testing? More specifically, what is ETL automation testing? Data testing is a process used to evaluate the quality of data that is entered into a system. It is typically used to ensure that data is accurate, complete, and compliant with the system’s requirements.

ETL automation testing, by extension, is a type of data testing that focuses on the ETL process: extracting data from a source system, transforming it into a format suitable for the target system, and then loading it into the target system. Data testing automation tools are used to ensure that the data is properly transformed and loaded into the target system and that it is accurate and complete. Users can apply their unique knowledge to test for outcomes that they know are likely to signal an error. This is where an understanding of the business can make data work much more valuable.
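To make that concrete, here is a minimal sketch of what automated checks on loaded data might look like. It is plain Python for illustration, not how Mozart Data or any particular testing tool implements them; the function names, the sample rows, and the "negative totals are an error" business rule are all assumptions.

```python
from typing import Any

# Hypothetical rows as they might look after being loaded into the warehouse.
loaded = [
    {"order_id": 1, "email": "a@example.com", "total": 20.0},
    {"order_id": 2, "email": "", "total": 35.5},
    {"order_id": 2, "email": "c@example.com", "total": -5.0},
]


def check_not_null(rows: list[dict[str, Any]], column: str) -> list[int]:
    """Completeness: return indexes of rows where `column` is missing or empty."""
    return [i for i, row in enumerate(rows) if row.get(column) in (None, "")]


def check_unique(rows: list[dict[str, Any]], column: str) -> list[Any]:
    """Accuracy: return values of `column` that appear more than once."""
    seen: set = set()
    dupes: list = []
    for row in rows:
        value = row.get(column)
        if value in seen and value not in dupes:
            dupes.append(value)
        seen.add(value)
    return dupes


def check_row_count(source_count: int, rows: list[dict[str, Any]]) -> bool:
    """Load check: the target should hold as many rows as the source sent."""
    return source_count == len(rows)


# Business-knowledge check (assumed rule): an order total should never be negative.
negative_totals = [row["order_id"] for row in loaded if row["total"] < 0]

missing_emails = check_not_null(loaded, "email")
duplicate_ids = check_unique(loaded, "order_id")
counts_match = check_row_count(3, loaded)
```

The last check is the kind that only someone who knows the business would think to write, which is the point made above.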

A data warehouse like Snowflake by itself is powerful since it allows you to secure all the data your business creates in one place. However, you need to have the other components of the data platform in order to get the most value out of a warehouse.

An ETL tool is the component that must exist between the raw data you collect and the data warehouse. First, data is extracted from sources, including but not limited to:

Analytics tools (Google Analytics)

Business/HR Management Platforms (QuickBooks, Xero)

CRM (Salesforce, Zendesk, Freshdesk, Intercom, Kustomer)

Digital Marketing (Google Ads)

E-Commerce Platforms (Shopify, Square, Stripe)

Email Marketing Platforms (Klaviyo, Mailchimp, SendGrid)

Product Management Tools (Airtable, Jira)

Product/Website Analytics (Google Analytics, Heap, Mixpanel)

Spreadsheets (Google Sheets, CSVs)

If there are specific tools that your business needs to function, be sure that any data platform you consider has components that integrate seamlessly with those tools so that data can be extracted cleanly. Something that seems as simple as standardizing naming conventions across different tools would be an extremely heavy lift to do manually but is part and parcel of a data platform’s ETL component.
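As a rough illustration of what standardizing naming conventions involves, the sketch below converts field names from different tools to one convention. The choice of snake_case and the helper names are assumptions made for this example; a real ETL tool applies its own rules.

```python
import re


def to_snake_case(name: str) -> str:
    """Normalize a field name such as 'Customer ID' or 'customerId' to snake_case."""
    # Replace runs of spaces, hyphens, and other punctuation with one underscore.
    name = re.sub(r"[^0-9A-Za-z]+", "_", name.strip())
    # Split camelCase boundaries: 'customerId' -> 'customer_Id'.
    name = re.sub(r"([a-z0-9])([A-Z])", r"\1_\2", name)
    return name.strip("_").lower()


def standardize_row(row: dict) -> dict:
    """Return a copy of `row` with every key renamed to snake_case."""
    return {to_snake_case(key): value for key, value in row.items()}


# The same field, named three different ways by three hypothetical sources.
crm_row = standardize_row({"Customer ID": 42})
app_row = standardize_row({"customerId": 42})
billing_row = standardize_row({"customer-id": 42})
```

All three rows end up with the same `customer_id` key, so they can be compared or joined without per-tool special cases.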

Extracted data is then transformed, meaning that it is passed through a series of functions that cleanse, map, and change the data into usable forms. An ETL tool is very useful in cases where you know you always want to modify a dataset in the same way as you load it. The ETL tool makes it possible to schedule that transformation without any manual intervention.
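One way to picture "a series of functions" is a list of small steps applied in order to every record as it is loaded. This is a sketch under assumed names: the steps, the `COUNTRY_CODES` mapping, and the sample record are invented for illustration, and scheduling is left to whatever tool runs the pipeline.

```python
from functools import reduce


def strip_whitespace(row: dict) -> dict:
    """Cleanse: trim stray whitespace from every string value."""
    return {k: v.strip() if isinstance(v, str) else v for k, v in row.items()}


# Hypothetical mapping from free-text country names to two-letter codes.
COUNTRY_CODES = {"united states": "US", "usa": "US", "canada": "CA"}


def map_country(row: dict) -> dict:
    """Map: replace known country names with a code, leaving unknown values alone."""
    country = row.get("country")
    if isinstance(country, str):
        return {**row, "country": COUNTRY_CODES.get(country.lower(), country)}
    return row


def cents_to_dollars(row: dict) -> dict:
    """Change: convert an integer `amount_cents` field into a decimal `amount`."""
    if "amount_cents" not in row:
        return row
    out = {k: v for k, v in row.items() if k != "amount_cents"}
    out["amount"] = row["amount_cents"] / 100
    return out


PIPELINE = [strip_whitespace, map_country, cents_to_dollars]


def transform(row: dict, steps=PIPELINE) -> dict:
    """Apply each step in order; the output of one step feeds the next."""
    return reduce(lambda acc, step: step(acc), steps, row)


raw = {"name": "  Ada ", "country": "USA ", "amount_cents": 1250}
clean = transform(raw)
```

Because the same list of steps runs on every load, the result is repeatable without anyone touching the data by hand.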

On the other hand, a transform tool can be used on data that has already made its way into your data warehouse and is useful when creating unique data views. For example, if you want to take portions of two tables and combine them for analysis and need to map the same user ID across different datasets, a transformation layer will be necessary.
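The user ID example might look something like the sketch below. In practice this kind of transform is usually written as SQL against the warehouse; Python is used here only for illustration, and the tables, the `id_map` lookup, and the field names are hypothetical.

```python
# Hypothetical lookup: billing-system customer IDs -> product user IDs.
id_map = {"cus_001": "u1", "cus_002": "u2"}

users = [
    {"user_id": "u1", "plan": "pro"},
    {"user_id": "u2", "plan": "free"},
]

invoices = [
    {"customer_id": "cus_001", "amount": 50},
    {"customer_id": "cus_001", "amount": 25},
    {"customer_id": "cus_003", "amount": 10},  # no known mapping
]


def revenue_by_user(users: list[dict], invoices: list[dict], id_map: dict) -> list[dict]:
    """Join portions of two tables on a shared user ID and total revenue per user."""
    totals: dict = {}
    for invoice in invoices:
        user_id = id_map.get(invoice["customer_id"])
        if user_id is None:
            continue  # skip invoices that cannot be tied to a user
        totals[user_id] = totals.get(user_id, 0) + invoice["amount"]
    return [
        {"user_id": u["user_id"], "plan": u["plan"], "revenue": totals.get(u["user_id"], 0)}
        for u in users
    ]


view = revenue_by_user(users, invoices, id_map)
```

Silently skipping unmapped invoices is a design choice made to keep the sketch short; a real view might surface them so the mapping can be fixed.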

The data transformation functions performed on the data may include:

Joining data from multiple sources

Filtration

Standardization

Validation

Removing unimportant data / deduplication

Sorting of data to improve search performance

Transposition

Data that is clean, deduplicated, and readable is much more accurate and usable than raw data from multiple inputs.
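A few of the functions listed above (standardization, filtration, deduplication, and sorting) can be sketched in a handful of lines. The field names, the rule that rows with status "test" are unimportant, and the keep-the-latest deduplication policy are assumptions for this example.

```python
rows = [
    {"email": "A@Example.com", "status": "active", "updated": "2024-01-01"},
    {"email": "b@example.com", "status": "test", "updated": "2024-01-03"},
    {"email": "a@example.com ", "status": "active", "updated": "2024-02-01"},
    {"email": "c@example.com", "status": "active", "updated": "2024-01-15"},
]


def clean_contacts(rows: list[dict]) -> list[dict]:
    """Standardize, filter, deduplicate, and sort a list of contact rows."""
    # Standardization: one canonical spelling per email address.
    standardized = [{**r, "email": r["email"].strip().lower()} for r in rows]
    # Filtration: drop rows that are not real customers (assumed rule).
    filtered = [r for r in standardized if r["status"] != "test"]
    # Deduplication: walk oldest to newest so the latest row per email wins.
    latest: dict = {}
    for r in sorted(filtered, key=lambda r: r["updated"]):
        latest[r["email"]] = r
    # Sorting: return rows in a stable, predictable order.
    return sorted(latest.values(), key=lambda r: r["email"])


contacts = clean_contacts(rows)
```

Note that the order of the steps matters: without standardizing first, the two spellings of the same address would not be recognized as duplicates.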

From your data warehouse, reliable data can be loaded into a BI tool or spreadsheet with confidence. A BI tool may or may not be part of a modern data platform. This type of tool is what makes it easy for a wide range of users to see how the business is performing, without the help of a data analyst. Because data in a platform has gone through the transformation process, you can feel confident that the data end users are seeing is accurate and makes up a strong foundation for business decisions.

Keeping data organized is another critical way to make sure that data is as usable as possible. Data cataloging functionality should make it easy for users to set up their own data views and track the data that is most important to them. Not only does data cataloging make it easier to use your collected data, by creating descriptions and tags that make sense to your own company and culture, but it is also much easier to share that data with new hires and onboard more effectively.
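Conceptually, a data catalog is a searchable set of descriptions and tags attached to tables. The sketch below is a toy in-memory version meant only to show the idea; the class names, fields, and sample entries are hypothetical and do not reflect any product's catalog.

```python
from dataclasses import dataclass, field


@dataclass
class CatalogEntry:
    table: str
    description: str
    owner: str
    tags: set[str] = field(default_factory=set)


class Catalog:
    """A minimal in-memory catalog keyed by table name."""

    def __init__(self) -> None:
        self._entries: dict[str, CatalogEntry] = {}

    def register(self, entry: CatalogEntry) -> None:
        self._entries[entry.table] = entry

    def find_by_tag(self, tag: str) -> list[str]:
        """Return the names of all tables carrying `tag`, alphabetically."""
        return sorted(e.table for e in self._entries.values() if tag in e.tags)

    def search(self, text: str) -> list[str]:
        """Return tables whose name or description mentions `text`."""
        needle = text.lower()
        return sorted(
            e.table
            for e in self._entries.values()
            if needle in e.table.lower() or needle in e.description.lower()
        )


catalog = Catalog()
catalog.register(CatalogEntry("orders_clean", "One row per paid order, deduplicated.", "analytics", {"revenue", "core"}))
catalog.register(CatalogEntry("weekly_signups", "Signups per week by marketing channel.", "growth", {"marketing"}))
catalog.register(CatalogEntry("mrr_by_plan", "Monthly recurring revenue split by plan.", "finance", {"revenue"}))

revenue_tables = catalog.find_by_tag("revenue")
```

A new hire looking for revenue data can start from the tag and read the descriptions, rather than guessing at table names.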

If you’re investing in a modern data platform, you already have data coming in from many different sources. Keeping track of data issues manually can be a big risk, so data platforms have alert systems in place that can notify the relevant team when something happens, like a drop in inventory, a revenue goal being met, or something irregular that should be checked before it creates a bigger issue.
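An alert of this kind boils down to a named condition evaluated against current data. Here is a minimal sketch; the `AlertRule` class, the thresholds, and the metric names are assumptions for illustration and not the alerting interface of Mozart Data or any other platform.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class AlertRule:
    name: str
    condition: Callable[[dict], bool]  # returns True when the alert should fire
    message: str


def evaluate(rules: list[AlertRule], snapshot: dict) -> list[str]:
    """Return a notification line for every rule whose condition is met."""
    return [f"{rule.name}: {rule.message}" for rule in rules if rule.condition(snapshot)]


# Hypothetical rules covering the three cases described above.
rules = [
    AlertRule("low_inventory", lambda s: s["inventory_units"] < 100,
              "inventory dropped below 100 units"),
    AlertRule("revenue_goal", lambda s: s["monthly_revenue"] >= 50_000,
              "monthly revenue goal met"),
    AlertRule("missing_fields", lambda s: s["rows_missing_email"] > 0,
              "rows loaded without an email"),
]

# A hypothetical snapshot of metrics computed from the warehouse.
snapshot = {"inventory_units": 80, "monthly_revenue": 42_000, "rows_missing_email": 3}

fired = evaluate(rules, snapshot)
```

In a real platform the fired messages would be routed to email or chat for the relevant team; here they are simply collected in a list.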

A modern data platform can make your business data more reliable and more accessible to more people on every team. If you do not currently have a data solution, it may be tempting to build your own data platform or cobble together a collection of tools to save on the cost of a fully integrated data stack. However, in most cases, particularly for start-ups, this is not recommended. Most companies have somewhat limited resources. Dedicating an expensive engineer to building a data platform, or to finding a collection of data tools that mimics one, is simply not money or time well spent.

Data platform companies know what they’re doing and will choose the right tools that play nicely together. When left to choose your own data tools, no matter what your criteria, there is always the chance that integrations will not work properly and you will end up with customer support tickets in multiple places without an easy answer.

If you decide to buy, rather than build or assemble your own big data platform, consider Mozart Data. Mozart Data offers its customers a modern data platform that simplifies the business intelligence architecture process. Connecting your data sources to the Mozart Data stack allows you to have a centralized space to scrub, transform, and organize your data before syncing it with your BI tool. Mozart Data has partnered with Snowflake as our data warehouse provider, since we know Snowflake is secure, flexible, and easy to use.

Another modern data platform example would be Y42. Y42 is a cloud-based data platform that integrates with your company’s existing data warehouse. The Y42 browser interface allows for no-code data queries and includes a built-in BI tool for data visualization. It does not include a data warehouse of its own, but it is compatible with both Snowflake and BigQuery.

If you’re ready to learn more, sign up for a demo here!
