Organizations of all sizes need to be able to systematically analyze data in order to benefit from it. Data transformation involves changing the structure and accessibility of raw data to be more organized so you can perform analytics efficiently.

Defining Data Transformation

Data transformation involves changing some of the essential attributes of raw data, such as its format, values, and structure. These changes can be conducted at two different stages in the data pipeline.

In the past, many enterprises transformed data before loading it into their data warehouse because storage costs were much higher than they are now. Today, many businesses just use the cloud for data warehousing, so they can scale computing resources and skip pre-load transformation. Raw data is loaded into the warehouse and transformed according to the preferences and queries of your data analysts. This is the last stage of a data transfer process known as Extract, Load and Transform, also known as ELT.

Organizations have the option to use several different ETL / ELT tools to automate data transformation these days. Data engineers and other professionals may also use scripting languages like Python to transform data, but of course, their time is valuable (and expensive). Languages such as SQL, that are domain specific, may also be used for this purpose.

Part of the reason we created Mozart Data is to provide a central environment in the cloud where the entire ETL/ELT process can take place. In fact, we actually refer to our own process as ETLT because we allow you to transform your data before and after the loading process if that suits your needs. More flexibility means more power in data analysis, and Mozart gives you more options for how you transfer and analyze your data than any other tool on the market.

In any of the processes that use it, data transformation may be aesthetic, structural, constructive, or destructive:

-

Aesthetic transformation involves stylistic changes, such as standardizing street names or other values.

-

Structural transformation involves combining and/or moving columns.

-

Constructive transformation is where raw data is added, copied, and replicated to fill gaps and standardize the data.

-

Destructive transformation is where entire fields and records may be deleted to clean the data and make it more useful.

Why Is Data Transformation Important?

Data transformation is important because it organizes data to enable your business to analyze and use it more easily. Imagine data as objects that are coming at you from several different directions. While all of the objects may have value, you’ll only be able to fully realize that value when all of them are organized in a way that makes the different groupings within them easy to understand and interpret.

Both humans and computers are able to utilize data better when it’s organized. In addition, once data has been validated and properly formatted, its quality improves. Any reports that are generated from it are more likely to be accurate and can be reliably used to guide your company in the right direction.

When data hasn’t been transformed, there’s always a risk that errors could be introduced into the reports that are generated from it. For example, during data transformation, some records like unexpected duplicates that could skew your results can be deleted, improving the accuracy of the insights that your data analysts discover.

Data transformation protects your applications from null values and other problems that could slow down the progress of a query or cause more serious problems. It also improves compatibility between systems that are using different types of data. That speeds up all of your processes, since there are fewer delays that would be caused by one application trying to decipher what the other is asking.

Sometimes the same data set needs to be used for more than one purpose. For each of those uses, it might be best to have the data in a specific format. Data transformation changes the data into appropriate formats that are the best fit for each of them.

How Does Data Transformation Work?

Data transformation helps to clean data for better analysis and decision making. After data is extracted from your data sources, the transformation process turns it into a more usable form to make it easier to analyze. However, every business has to decide which process works best for them. It’s important to use a method of data transformation that you can commit to for the long haul.

Data transformation usually starts with the conversion of data types, so that systems and applications can handle it more easily. Hierarchical data should also be flattened in the first phase – that is, relationships between data where hierarchical relationships exist or where one data item is thought of as the parent of another data item will have to be adjusted to improve the speed of communication and processing.

After the first phase, your data will be compatible with most systems for most use-cases. This means that you can conduct most kinds of analysis without too many problems. Your analysts and engineers can add more transformations as necessary in order to be able to understand your data better. These are known as individual layers of processing, and they allow your team to modify the data to be a better fit for the unique needs of the query that is being run.

For example, sometimes that dataset might need to be adapted before your analysis can yield a particular insight. It might be necessary to revisit your transformations, add more data, or restructure the way the data is formatted. That really depends on what you’re trying to learn from the data, but your data engineers should be able to transform the data to fit that task.

Mapping

Translation and mapping are two of the most common types of data transformation. It links the elements in a set of data to another set which can allow the user to better understand the original dataset. For example, suppose you run an auto repair business and a technician is looking at a column with codes that represent different types of vehicle errors. This could be mapped to another set of data that explains what type of problem each code represents. Of course, as you combine different sets of data, it gets more difficult for any one person to understand it, but that’s where business intelligence tools come in.

Combining data from multiple sources through translation and mapping makes it a lot easier to draw conclusions from your data. To stick with our metaphor, combining different error codes from a car with a database of historical issues can help auto technicians rapidly identify what’s wrong with a customer’s car. They may know what individual codes mean, but combining data sources can provide unique conclusions more difficult than any one person could draw from the data. This type of data transformation is very useful in any case where multiple customer-facing applications are being employed by your business.

Summarization And filtering

Data transformation is all about making data more manageable. Most companies will frequently be pulling the same type of data at regular times from the same sources. You may not need to include all of that in your analysis just as it is. Irrelevant data will only take up space and hinder your system’s ability to be as agile as it should be. It takes a lot of processing power to analyze this much data at scale.

To solve this problem, you can reduce the amount of data that you have to work with without affecting the quality of the data or changing the picture that you would get from it. You can filter out fields, columns, and records that are unnecessary for the analysis you’re running at the time. This consolidates your data, so it becomes easier for your system to analyze.

Sometimes data may not be relevant to a particular query or might not be needed for a particular study. For example, if you run a delivery business and want data on the number of customers who purchase a particular SKU, you might not really care about the size of the packages that they purchase. That data is irrelevant, so you could omit it. However, you probably care a lot about how often they order it and how many they order at one time.

Anonymization And Encryption

Sometimes, the data your business collects might contain personally identifiable information. For example, lots of medical or health-adjacent businesses (ie: direct to consumer medications or an exercise app) will have data that would allow anyone to link a patient’s identity with their private health info. Legal issues aside, that’s risky personal data that’s probably not of any interest to your data team.

Any information that could compromise privacy or security should be anonymized. In many industries, data encryption is required by law. Systems can encrypt your data at several different levels, ensuring that you meet the quality standards for your sector.

Considering the highly publicized data breaches over the last few years, and the general public’s increased awareness of the risk that data leaks and identity theft pose to them, this process is more important than ever. The reality is that it’s almost impossible to truly secure data while it’s connected to the web, but anonymizing data from the start can keep you and your customers safe.

By scrubbing personal data from your data as soon as you take it in, you’re able to take most of the risk out of private information being leaked or stolen from your company. Anonymize your data early and often.

Enrichment and substitution

There are many cases in which you can save space and perform an analysis more effectively if you merge data from different sources. This is a critical step for creating enriched information.

For example, suppose your business has customers who purchase products from your physical stores, online, and via various other channels. If you want to understand how much each customer really spends with your business per month, it’s not really helpful to view all of these transactions individually. Instead, you’ll benefit by combining all of these transactions so you have a single figure to work with.

If you add this to a customer information chart, it’ll help you save storage space (and cost) while allowing you to make predictions on sales for the coming year effortlessly.

For data substitution, there are a number of situations where you might want to automatically alter or substitute the values in your data. One such example might be standardizing names, such as turning every instance of Bob Smith in a database into Robert Smith to make sure the value is standardized across the data set.

Another example might be replacing or scrambling values automatically to help with encryption. While you retain the key to decode it, if the data is stolen or misplaced, it would be indecipherable to a hacker.

What Are the Difficulties That Are Sometimes Associated With Data Transformation?

While data transformation is important for organizations of all sizes, there are a few reasons why it can be challenging. One is the cost. Data transformation can be expensive, especially if you’ve hired a data engineer, and the infrastructure, tools, or software that are used in the process will all impact your budget as well. You may also have expenses that are related to licensing, hiring additional personnel, or obtaining adequate computing resources (Fortunately for you, Mozart can help consolidate these tools and lower the cost of cleaning your data.)

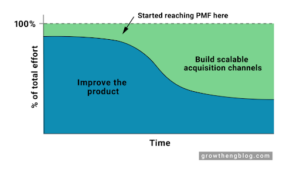

Data transformation consumes a great deal of resources, especially if you use ETL. In order to cut down on the lag that results from this use of computing power, many businesses opt for cloud-based data warehousing. Many of them switch to ELT, which allows them to use their computing resources in a more dynamic way and speed up their queries.

While data transformation usually improves the quality of data, it’s not hard to introduce even more issues into the data during the transformation process if you don’t have the right skills. If your data analysts are not experts in the subject matter, they might not notice incorrect data because they’re less familiar with what accurate values should look like.

Sometimes businesses perform data transformations that are unnecessary, only realizing later on that those were not in line with their needs. For example, in some sectors, it may be better to present data in one particular format, but if the data is changed to this format automatically for a business for another project, they may have to change it back again or modify it to something else before it can be properly utilized.

The short version is that data transformation is critical to create a dataset that will give you accurate results, but there are lots of wrinkles along the way. You need to work with transformation experts and platforms, like the people and tools found here at Mozart Data, to make sure you’re setting up your data correctly. Contact us to learn more about how Mozart can help you make sense of your data.